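Deploying Kubernetes with kubeadm

kubeadm is a tool that provides kubeadm init and kubeadm join as a best-practice "fast path" for creating Kubernetes clusters.

kubeadm performs the actions necessary to get a minimum viable cluster up and running.

GitHub: kubeadm

Official kubeadm installation documentation

The installation consists of roughly the following four steps: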

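```shell
# 1. Install Docker
$ yum install docker-ce docker-ce-cli containerd.io docker-compose-plugin -y
# 2. Install kubelet, kubeadm and kubectl
$ yum install kubelet kubeadm kubectl -y
# 3. Initialize the control plane on the master node
$ kubeadm init
# 4. Join each worker node to the cluster
$ kubeadm join <master-node-IP:port> --token <token> --discovery-token-ca-cert-hash <hash>
```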

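Installing Docker

See: Installing Docker on CentOS 7

Installing kubeadm

Official documentation

The first step in using kubeadm is to manually install three binaries on the machine: kubeadm, kubelet and kubectl.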

```shell
# Add the Kubernetes yum repository (Aliyun mirror)
$ cat <<EOF | sudo tee /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

# Put SELinux into permissive mode, now and after reboot
$ sudo setenforce 0
$ sudo sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

# List the kubeadm versions available in the repository
$ yum list kubeadm --showduplicates -y | sort -r

# Install pinned versions of the three packages (plus ipvsadm)
$ yum install -y ipvsadm kubeadm-1.21.0-0 kubelet-1.21.0-0 kubectl-1.21.0-0 --disableexcludes=kubernetes
```

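Configuring the cgroup driver

Because kubeadm manages the kubelet as a systemd service, the systemd driver is recommended for kubeadm-based installations; the cgroupfs driver is not.

The container runtimes page of the official documentation explains that control groups (cgroups) are used to constrain the resources allocated to processes. The cgroup driver is what the kubelet and the container runtime use to interface with them.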

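Configuring both the container runtime and the kubelet to use systemd as the cgroup driver makes the system more stable, because a single cgroup manager then controls the node's resources. For Docker, set the native.cgroupdriver=systemd option.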
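As a rough sketch of the Docker setting mentioned above, the helper below prints a daemon configuration that selects the systemd cgroup driver. The function name render_docker_daemon_json is made up for this example, and it assumes Docker reads its configuration from /etc/docker/daemon.json; if that file already exists, the entry should be merged by hand rather than overwritten.

```shell
# Print a Docker daemon configuration that selects the systemd cgroup driver.
# Only the "exec-opts" entry comes from the text above.
render_docker_daemon_json() {
  cat <<'EOF'
{
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}

# Possible use on each node (needs root, so shown as comments only):
#   render_docker_daemon_json | sudo tee /etc/docker/daemon.json
#   sudo systemctl restart docker
#   docker info --format '{{.CgroupDriver}}'
```

After the restart, the last command is a quick way to confirm which cgroup driver Docker is actually using.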

kubeadm accepts a KubeletConfiguration structure when kubeadm init is run. KubeletConfiguration contains a cgroupDriver field, which controls the kubelet's cgroup driver.
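One possible way to hand a KubeletConfiguration to kubeadm init is a configuration file passed with --config, sketched below. The helper name render_kubeadm_config and the file name kubeadm-config.yaml are illustrative. The kubeadm.k8s.io/v1beta2 API version is an assumption that fits the 1.21 release line used in this article and may need adjusting for other releases.

```shell
# Print a kubeadm configuration that sets the kubelet cgroup driver to systemd.
# $1: Kubernetes version to install, for example v1.21.12
render_kubeadm_config() {
  cat <<EOF
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: $1
imageRepository: registry.aliyuncs.com/google_containers
---
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
cgroupDriver: systemd
EOF
}

# Possible use on the master node (shown as comments only):
#   render_kubeadm_config v1.21.12 > kubeadm-config.yaml
#   sudo kubeadm init --config kubeadm-config.yaml
```

The version and image repository are placed in the file because kubeadm does not accept --config together with flags such as --kubernetes-version or --image-repository.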

Initializing the cluster control plane with kubeadm init

```shell
# List the images required by the target version
$ kubeadm config images list --kubernetes-version=v1.21.12
k8s.gcr.io/kube-apiserver:v1.21.12
k8s.gcr.io/kube-controller-manager:v1.21.12
k8s.gcr.io/kube-scheduler:v1.21.12
k8s.gcr.io/kube-proxy:v1.21.12
k8s.gcr.io/pause:3.4.1
k8s.gcr.io/etcd:3.4.13-0
k8s.gcr.io/coredns/coredns:v1.8.0

# Pull the images from the Aliyun mirror
$ kubeadm config images pull --kubernetes-version=v1.21.12 --image-repository=registry.aliyuncs.com/google_containers
[config/images] Pulled registry.aliyuncs.com/google_containers/kube-apiserver:v1.21.12
[config/images] Pulled registry.aliyuncs.com/google_containers/kube-controller-manager:v1.21.12
[config/images] Pulled registry.aliyuncs.com/google_containers/kube-scheduler:v1.21.12
[config/images] Pulled registry.aliyuncs.com/google_containers/kube-proxy:v1.21.12
[config/images] Pulled registry.aliyuncs.com/google_containers/pause:3.4.1
[config/images] Pulled registry.aliyuncs.com/google_containers/etcd:3.4.13-0
failed to pull image "registry.aliyuncs.com/google_containers/coredns/coredns:v1.8.0": output: Error response from daemon: pull access denied for registry.aliyuncs.com/google_containers/coredns/coredns, repository does not exist or may require 'docker login': denied: requested access to the resource is denied
, error: exit status 1
To see the stack trace of this error execute with --v=5 or higher

# The coredns pull failed, so fetch the image from Docker Hub and retag it
$ docker pull coredns/coredns:1.8.0
$ docker tag coredns/coredns:1.8.0 registry.aliyuncs.com/google_containers/coredns/coredns:v1.8.0
$ docker rmi coredns/coredns:1.8.0

# Initialize the control plane
$ kubeadm init --kubernetes-version=v1.21.12 --image-repository=registry.aliyuncs.com/google_containers
......
Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

  export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 172.20.197.138:6443 --token 5wlp13.mkgeq6uma3zw5xif \
    --discovery-token-ca-cert-hash sha256:3a1352c0829af0fc410db8e0a259d4d8c5c60e8c3d7527a6c2b04056d49698fe

# Set up kubectl access for the current user
$ mkdir -p $HOME/.kube
$ cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
$ chown $(id -u):$(id -g) $HOME/.kube/config

# Start the kubelet now and on every boot
$ sudo systemctl enable --now kubelet
```

```shell
$ kubectl get all -A
NAMESPACE     NAME                                                READY   STATUS    RESTARTS   AGE
kube-system   pod/coredns-545d6fc579-596q8                        0/1     Pending   0          8m42s
kube-system   pod/coredns-545d6fc579-7q4qh                        0/1     Pending   0          8m42s
kube-system   pod/etcd-aliyun-172-20-197-138                      1/1     Running   0          8m50s
kube-system   pod/kube-apiserver-aliyun-172-20-197-138            1/1     Running   0          8m50s
kube-system   pod/kube-controller-manager-aliyun-172-20-197-138   1/1     Running   0          8m50s
kube-system   pod/kube-proxy-mbf4m                                1/1     Running   0          8m43s
kube-system   pod/kube-scheduler-aliyun-172-20-197-138            1/1     Running   0          8m50s

NAMESPACE     NAME                 TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)                  AGE
default       service/kubernetes   ClusterIP   10.96.0.1    <none>        443/TCP                  8m59s
kube-system   service/kube-dns     ClusterIP   10.96.0.10   <none>        53/UDP,53/TCP,9153/TCP   8m56s

NAMESPACE     NAME                        DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR            AGE
kube-system   daemonset.apps/kube-proxy   1         1         1       1            1           kubernetes.io/os=linux   8m56s

NAMESPACE     NAME                      READY   UP-TO-DATE   AVAILABLE   AGE
kube-system   deployment.apps/coredns   0/2     2            0           8m56s

NAMESPACE     NAME                                 DESIRED   CURRENT   READY   AGE
kube-system   replicaset.apps/coredns-545d6fc579   2         2         0       8m42s
```

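Once installation is complete, you can try running kubectl top node. The command only returns data once metrics-server, covered later in this article, is running.

Joining worker nodes to the cluster

Official documentation: kubeadm join

Run kubeadm join on each worker node to add it to the cluster (run this on worker nodes only).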

```shell
$ kubeadm join 172.20.197.138:6443 --token 5wlp13.mkgeq6uma3zw5xif \
    --discovery-token-ca-cert-hash sha256:3a1352c0829af0fc410db8e0a259d4d8c5c60e8c3d7527a6c2b04056d49698fe
```

If the token does not exist or has expired, generate a new one:

```shell
# List the existing tokens
$ kubeadm token list
TOKEN                     TTL         EXPIRES   USAGES                   DESCRIPTION   EXTRA GROUPS
rcpn9t.6oby6fnuupuqcv9a   <forever>   <never>   authentication,signing   <none>        system:bootstrappers:kubeadm:default-node-token

# Create a token that never expires (--ttl 0) and print the full join command
$ kubeadm token create --ttl 0 --print-join-command
kubeadm join 10.10.183.175:6443 --token rcpn9t.6oby6fnuupuqcv9a --discovery-token-ca-cert-hash sha256:dcd35653ff4534e082628a8562201a573d3d350fbed9a9d828b89e2fb28d704c
```

If you no longer have the discovery-token-ca-cert-hash value, run the following on the master to recompute it:

```shell
$ echo "sha256:$(openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //')"
sha256:dcd35653ff4534e082628a8562201a573d3d350fbed9a9d828b89e2fb28d704c
```
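Because the token and the hash are usually copied by hand between machines, a small sanity check before running kubeadm join can catch truncated values. The sketch below is only an illustration: the function names are invented here, and the patterns assume the formats seen in the examples above (a token of six characters, a dot and sixteen characters from a-z0-9, and a hash of "sha256:" followed by 64 lowercase hex digits).

```shell
# Succeed if $1 looks like a kubeadm bootstrap token.
is_bootstrap_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Succeed if $1 looks like a CA certificate hash.
is_ca_cert_hash() {
  printf '%s\n' "$1" | grep -Eq '^sha256:[0-9a-f]{64}$'
}

# Print the join command only when both values are well formed.
# $1: API server endpoint (host:port), $2: token, $3: CA certificate hash
build_join_command() {
  is_bootstrap_token "$2" || { echo "malformed token: $2" >&2; return 1; }
  is_ca_cert_hash "$3" || { echo "malformed hash: $3" >&2; return 1; }
  printf 'kubeadm join %s --token %s --discovery-token-ca-cert-hash %s\n' "$1" "$2" "$3"
}
```

The check is purely syntactic: it cannot tell whether a token has expired or whether the hash matches the cluster's CA.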

Installing metrics-server

GitHub: metrics-server

```shell
$ kubectl apply -f https://github.com/kubernetes-sigs/metrics-server/releases/latest/download/high-availability.yaml
```

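Installing a network plugin

Install a network plugin; until one is installed, nodes stay in the NotReady state. Run this on the master node.

```shell
$ kubectl apply -f https://projectcalico.docs.tigera.io/v3.23/manifests/calico.yaml
```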

```shell
$ kubectl get all -A
NAMESPACE     NAME                                             READY   STATUS              RESTARTS   AGE
kube-system   pod/calico-kube-controllers-685b65ddf9-7vwg5     0/1     Pending             0          2m13s
kube-system   pod/calico-node-4d4rz                            0/1     Init:0/2            0          2m14s
kube-system   pod/calico-node-4f267                            0/1     Init:0/2            0          2m14s
kube-system   pod/calico-node-kdlhp                            0/1     Init:ErrImagePull   0          2m14s
kube-system   pod/coredns-545d6fc579-2n2fl                     0/1     Pending             0          13m
kube-system   pod/coredns-545d6fc579-w49h2                     0/1     Pending             0          13m
kube-system   pod/etcd-bjtn-docker183-175                      1/1     Running             0          13m
kube-system   pod/kube-apiserver-bjtn-docker183-175            1/1     Running             0          13m
kube-system   pod/kube-controller-manager-bjtn-docker183-175   1/1     Running             0          13m
kube-system   pod/kube-proxy-5xbvh                             1/1     Running             0          12m
kube-system   pod/kube-proxy-mt99w                             1/1     Running             0          13m
kube-system   pod/kube-proxy-t22lv                             0/1     ContainerCreating   0          4m26s
kube-system   pod/kube-scheduler-bjtn-docker183-175            1/1     Running             0          13m

NAMESPACE     NAME                 TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)                  AGE
default       service/kubernetes   ClusterIP   10.96.0.1    <none>        443/TCP                  13m
kube-system   service/kube-dns     ClusterIP   10.96.0.10   <none>        53/UDP,53/TCP,9153/TCP   13m

NAMESPACE     NAME                         DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR            AGE
kube-system   daemonset.apps/calico-node   3         3         0       3            0           kubernetes.io/os=linux   2m14s
kube-system   daemonset.apps/kube-proxy    3         3         2       3            2           kubernetes.io/os=linux   13m

NAMESPACE     NAME                                      READY   UP-TO-DATE   AVAILABLE   AGE
kube-system   deployment.apps/calico-kube-controllers   0/1     1            0           2m14s
kube-system   deployment.apps/coredns                   0/2     2            0           13m

NAMESPACE     NAME                                                 DESIRED   CURRENT   READY   AGE
kube-system   replicaset.apps/calico-kube-controllers-685b65ddf9   1         1         0       2m14s
kube-system   replicaset.apps/coredns-545d6fc579                   2         2         0       13m
```
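The Calico Pods take a while to pull images and start, so nodes do not become Ready immediately. A minimal sketch of how to check on progress follows. The function names and the 300s default timeout are arbitrary choices for this example, and the parsing assumes the default column order of kubectl get nodes (NAME, STATUS, ROLES, AGE, VERSION).

```shell
# Print the names of nodes whose STATUS column is not exactly "Ready".
# A cordoned node ("Ready,SchedulingDisabled") is therefore listed as well.
not_ready_nodes() {
  kubectl get nodes --no-headers | awk '$2 != "Ready" { print $1 }'
}

# Block until every node reports the Ready condition, or fail on timeout.
# $1: timeout in a form kubectl understands, for example 300s
wait_for_nodes() {
  kubectl wait --for=condition=Ready nodes --all --timeout="${1:-300s}"
}
```

Running not_ready_nodes with no output means every node is Ready. wait_for_nodes is the blocking variant and is handy in scripts.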

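Removing the taint from the master

By default, the Master node does not run user Pods. Kubernetes enforces this through its Taint/Toleration mechanism.

The principle is simple: once a node has a Taint applied, that is, once it has been "tainted", no Pod can be scheduled onto it, because Kubernetes Pods are "fastidious about cleanliness".

The exception is a Pod that declares it can "tolerate" the taint, that is, a Pod that declares a Toleration. Only such a Pod may run on the node.

The command for adding a Taint to a node is:

```shell
$ kubectl taint nodes node1 foo=bar:NoSchedule
```

After this, node1 carries a Taint in key=value:effect form, namely foo=bar:NoSchedule. The NoSchedule effect means that the Taint only takes effect when new Pods are scheduled. It does not affect Pods already running on node1, even if they have no Toleration.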
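To illustrate the other half of the mechanism, here is a sketch of a Pod that declares a Toleration matching the foo=bar:NoSchedule taint. The helper name render_toleration_pod, the Pod name toleration-demo and the nginx image are placeholders chosen for this example only.

```shell
# Print a Pod manifest that tolerates the taint foo=bar:NoSchedule.
render_toleration_pod() {
  cat <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: toleration-demo
spec:
  containers:
  - name: app
    image: nginx
  tolerations:
  - key: "foo"
    operator: "Equal"
    value: "bar"
    effect: "NoSchedule"
EOF
}

# Possible use (shown as a comment only):
#   render_toleration_pod | kubectl apply -f -
```

A Toleration only permits scheduling onto the tainted node. It does not force the Pod there, so the scheduler may still place it on any other suitable node.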

Use kubectl describe to check the Taints field of the Master node:

```shell
$ kubectl describe node master
......
Roles:              control-plane,master
Taints:             node-role.kubernetes.io/master:NoSchedule
......
```

As shown, the Master node is given the taint node-role.kubernetes.io/master:NoSchedule by default. Its key is node-role.kubernetes.io/master, and no value is provided.

```shell
$ kubectl taint nodes --all node-role.kubernetes.io/master-
```

In the command above, a trailing hyphen "-" is appended to the key node-role.kubernetes.io/master. This form removes every Taint whose key is node-role.kubernetes.io/master.
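To confirm that the taint is really gone, the taint keys of every node can be listed in one pass. This is a sketch rather than a prescribed procedure, and the function names are invented for this example. It relies on kubectl's JSONPath output and prints one line per node, with the node name and its taint keys separated by a tab.

```shell
# Print "<node-name><TAB><taint keys>" for every node.
node_taints() {
  kubectl get nodes -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.spec.taints[*].key}{"\n"}{end}'
}

# Print only the names of nodes that still carry at least one taint.
tainted_nodes() {
  node_taints | awk -F '\t' '$2 != "" { print $1 }'
}
```

If tainted_nodes prints nothing after the taint has been removed, user Pods can be scheduled onto every node, including the master.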

Deploying the Dashboard

GitHub: kubernetes/dashboard

```shell
$ kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/master/src/deploy/recommended/kubernetes-dashboard.yaml
```

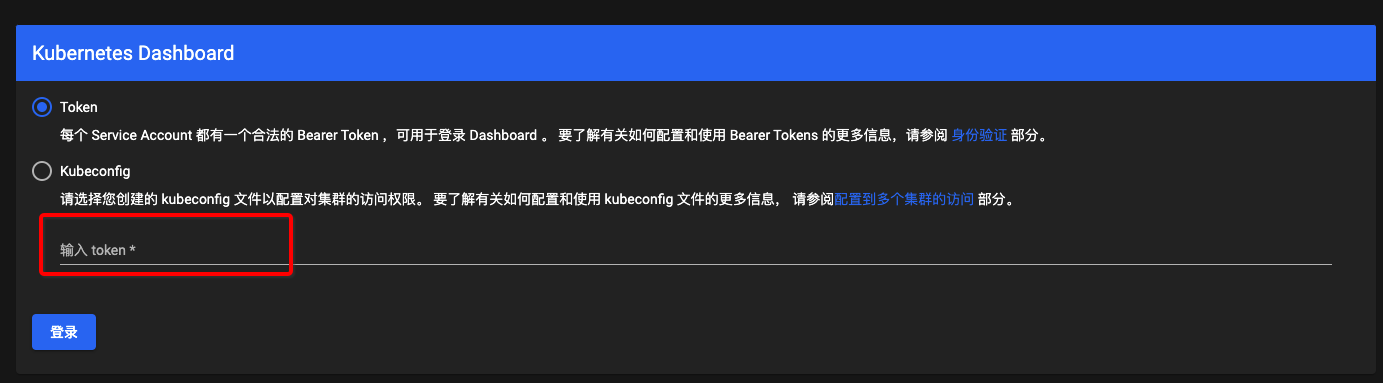

Creating a sample user

Creating a Service Account

```shell
cat <<EOF | kubectl apply -f -
apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kubernetes-dashboard
EOF
```

Creating a ClusterRoleBinding

```shell
cat <<EOF | kubectl apply -f -
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kubernetes-dashboard
EOF
```

Getting a Bearer Token

```shell
$ kubectl -n kubernetes-dashboard get secret $(kubectl -n kubernetes-dashboard get sa/admin-user -o jsonpath="{.secrets[0].name}") -o jsonpath="{.data.token}" | base64 --decode
```

Now copy the token and paste it into the Token field on the login screen.

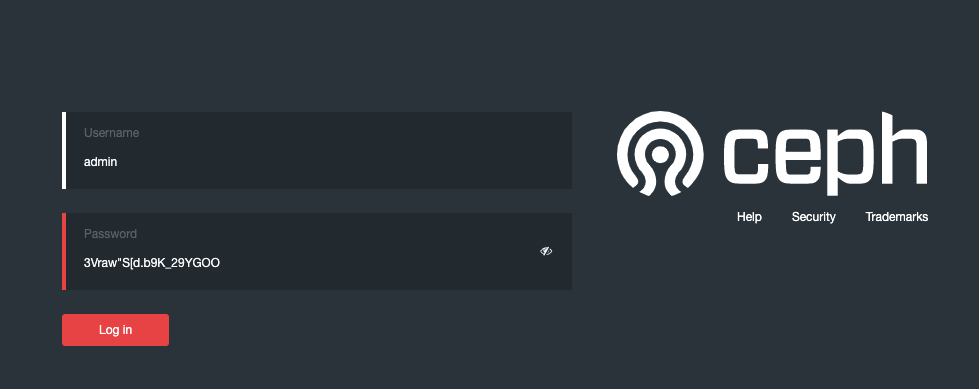

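Deploying the Rook storage plugin

GitHub: rook

Rook official documentation

A storage plugin mounts a remote data volume inside the container, backed by the network or some other mechanism. Files created in the container are then actually stored on a remote storage server, or spread across multiple nodes in a distributed fashion, and are not bound to the current host in any way. As a result, whichever host you start a new container on, it can request the specified persistent volume and access its contents. This is what "persistent" means.

Rook is a Kubernetes storage plugin based on Ceph (it later began adding support for more storage backends). Rather than simply wrapping Ceph, Rook adds a large set of enterprise features in its own implementation, such as horizontal scaling, migration, disaster backup and monitoring. This makes the project a complete, production-grade container storage plugin.

```shell
$ git clone --single-branch --branch master https://github.com/rook/rook.git
$ cd rook/deploy/examples
$ kubectl create -f crds.yaml -f common.yaml -f operator.yaml
$ kubectl create -f cluster.yaml
```

Once deployment completes, the following Pods are visible: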

```shell
$ kubectl get pods -n rook-ceph
NAME                                                           READY   STATUS      RESTARTS   AGE
csi-cephfsplugin-2qdzh                                         3/3     Running     0          46m
csi-cephfsplugin-provisioner-6c76497d8f-6hhtc                  6/6     Running     0          46m
csi-cephfsplugin-provisioner-6c76497d8f-gxnm4                  6/6     Running     0          46m
csi-cephfsplugin-wxddd                                         3/3     Running     0          46m
csi-cephfsplugin-xqxxw                                         3/3     Running     0          46m
csi-rbdplugin-6wv54                                            3/3     Running     0          46m
csi-rbdplugin-nvntl                                            3/3     Running     0          46m
csi-rbdplugin-provisioner-67c8865669-lzmf2                     6/6     Running     0          46m
csi-rbdplugin-provisioner-67c8865669-zj2f6                     6/6     Running     0          46m
csi-rbdplugin-wzznr                                            3/3     Running     0          46m
rook-ceph-crashcollector-bjtn-docker183-175-66db57c944-6ztkv   1/1     Running     0          5m15s
rook-ceph-crashcollector-bjtn-docker183-176-69f7d9896f-ksrdq   1/1     Running     0          4m53s
rook-ceph-crashcollector-bjtn-docker183-177-bbd67c98b-vrm5l    1/1     Running     0          5m15s
rook-ceph-mgr-a-7b45468648-d4xjj                               2/2     Running     0          5m16s
rook-ceph-mgr-b-7496ddccc8-df8kh                               2/2     Running     0          5m15s
rook-ceph-mon-a-6677c448d8-zl76l                               1/1     Running     0          45m
rook-ceph-mon-b-596dd7cf6c-7xplq                               1/1     Running     0          34m
rook-ceph-mon-c-7bbcdccb5b-sc2rc                               1/1     Running     0          22m
rook-ceph-operator-7997c6b75b-gqc4f                            1/1     Running     0          117m
rook-ceph-osd-prepare-bjtn-docker183-175-dlppd                 0/1     Completed   0          22s
rook-ceph-osd-prepare-bjtn-docker183-176-d76h2                 0/1     Completed   0          19s
rook-ceph-osd-prepare-bjtn-docker183-177-n4j2s                 0/1     Completed   0          16s
```

Next, install Rook's interactive toolbox, rook-ceph-tools:

```shell
$ kubectl create -f deploy/examples/toolbox.yaml
```

Wait for the toolbox Pod to reach the Running state. The following command blocks until the rollout has finished:

```shell
$ kubectl -n rook-ceph rollout status deploy/rook-ceph-tools
```

You can then open a shell inside rook-ceph-tools as follows:

```shell
$ kubectl -n rook-ceph exec -it deploy/rook-ceph-tools -- bash
```

Example:

```shell
$ kubectl -n rook-ceph exec -it deploy/rook-ceph-tools -- bash
[rook@rook-ceph-tools-6944779c47-mmczk /]$ ceph status
  cluster:
    id:     9984e5b9-09d5-483a-b203-fbc252d14868
    health: HEALTH_WARN
            clock skew detected on mon.d, mon.e
            OSD count 0 < osd_pool_default_size 3

  services:
    mon: 3 daemons, quorum a,d,e (age 23s)
    mgr: no daemons active
    osd: 0 osds: 0 up, 0 in

  data:
    pools:   0 pools, 0 pgs
    objects: 0 objects, 0 B
    usage:   0 B used, 0 B / 0 B avail
    pgs:
```

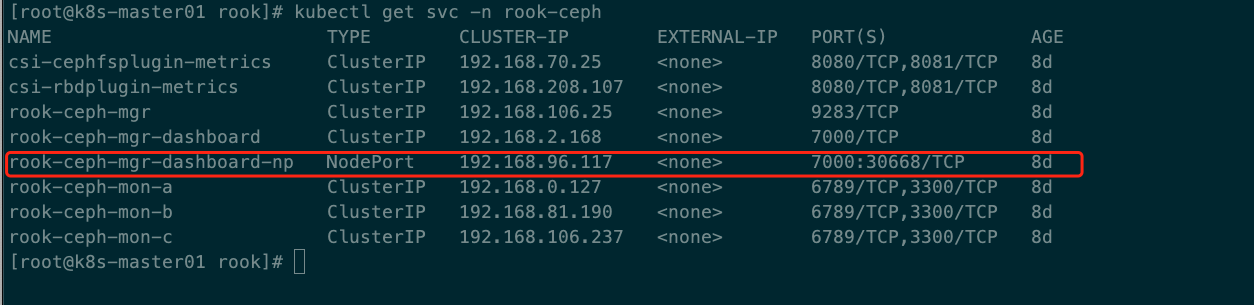

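To obtain the login password for the Ceph dashboard, read the rook-ceph-dashboard-password Secret in the rook-ceph namespace: extract the password field with jsonpath and decode it with base64.

```shell
$ kubectl -n rook-ceph get secret rook-ceph-dashboard-password -o jsonpath="{['data']['password']}" | base64 --decode && echo
3Vraw"S[d.b9K_29YGOO
```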

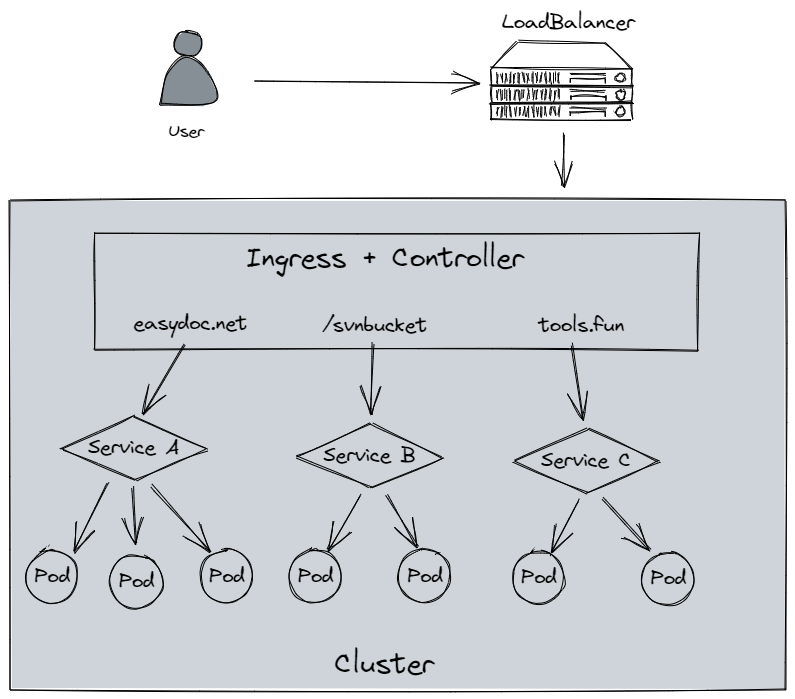

Installing Ingress

GitHub: ingress-nginx

ingress-nginx installation guide

```shell
$ kubectl apply -f https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v1.2.0/deploy/static/provider/cloud/deploy.yaml
```

After installation, the following Pods are present:

```shell
$ kubectl get pods -n ingress-nginx
NAME                                        READY   STATUS      RESTARTS   AGE
ingress-nginx-admission-create-lt2gs        0/1     Completed   0          46m
ingress-nginx-admission-patch-jqszn         0/1     Completed   2          46m
ingress-nginx-controller-7b5955586f-gl4bs   1/1     Running     0          46m
```

Using Ingress requires both a load balancer and an Ingress controller. On a cluster without a cloud load balancer, MetalLB can fill that role. Until a load balancer is available, the EXTERNAL-IP of the controller Service stays at <pending>, as shown below:

```shell
$ kubectl get svc -n ingress-nginx
NAME                                 TYPE           CLUSTER-IP      EXTERNAL-IP   PORT(S)                      AGE
ingress-nginx-controller             LoadBalancer   10.96.166.169   <pending>     80:30391/TCP,443:30852/TCP   44m
ingress-nginx-controller-admission   ClusterIP      10.102.245.18   <none>        443/TCP                      44m
```
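A quick way to spot this situation in a script is to filter the Service list for LoadBalancer entries that have no address yet. The helper below is a sketch with an invented name. It assumes the default column order of kubectl get svc (NAME, TYPE, CLUSTER-IP, EXTERNAL-IP, PORT(S), AGE).

```shell
# Print LoadBalancer Services in namespace $1 whose EXTERNAL-IP is still <pending>.
pending_load_balancers() {
  kubectl get svc -n "$1" --no-headers |
    awk '$2 == "LoadBalancer" && $4 == "<pending>" { print $1 }'
}

# Possible use (shown as a comment only):
#   pending_load_balancers ingress-nginx
```

An empty result means every LoadBalancer Service in the namespace has been assigned an external address.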

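Problems you may encounter

Problem 1

yum install fails with the following error:

```shell
Error: Package: kubelet-1.21.0-0.x86_64 (kubernetes)
           Requires: conntrack
 You could try using --skip-broken to work around the problem
 You could try running: rpm -Va --nofiles --nodigest
```

The fix is as follows:

```shell
# Add the Aliyun CentOS 7 base repository, which provides conntrack-tools
cd /etc/yum.repos.d/
wget http://mirrors.aliyun.com/repo/Centos-7.repo
yum install conntrack-tools -y
```

Problem 2

Images cannot be downloaded when installing ingress-nginx.

Problem 3

After metrics-server is installed, kubectl top node fails with the error: the server is currently unable to handle the request (get nodes.metrics.k8s.io)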
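The article does not record a fix for this error, but a reasonable first diagnostic step is to ask the API server whether the metrics API is registered as available. The sketch below uses invented function names. It assumes metrics-server registers the APIService v1beta1.metrics.k8s.io and runs as the metrics-server Deployment in kube-system, which is what the upstream manifests create.

```shell
# Print the status ("True" or "False") of the Available condition
# on the metrics APIService.
metrics_api_status() {
  kubectl get apiservice v1beta1.metrics.k8s.io \
    -o jsonpath='{.status.conditions[?(@.type=="Available")].status}'
}

# Report whether the metrics API is usable and say where to look next if not.
check_metrics_api() {
  status="$(metrics_api_status)"
  if [ "$status" = "True" ]; then
    echo "metrics API is available"
  else
    echo "metrics API is not available (status: ${status:-unknown})"
    echo "inspect: kubectl -n kube-system logs deploy/metrics-server"
    return 1
  fi
}
```

When the status is not True, the metrics-server logs usually say why the kubelets could not be scraped.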
